Written by ContentPowered.com

Facebook removes a lot of content on their platform, for a lot of different reasons. Sometimes those reasons are clear: the content is hateful, threatening, or otherwise awful. Sometimes the reasons are less clear, especially to the person who posted the content.

Before we get into the specific reasons why Facebook might remove a post, and what you can do about it, I want to make a few points clear.

Facebook removing your posts is not a violation of free speech. “Free speech” as it is protected in the United States of America is a vastly misunderstood concept. The First Amendment right to free speech restricts the government, not private companies. It protects your right to speak up and criticize the government without repercussions from that government, be it federal, state, or local. You can call President Trump anything you like, and the government will not send an FBI agent to your house to shut you up. You cannot be punished by the government for your speech.

Facebook, you may notice, is not a government agency or entity. It may have a lot of influence with the government by dint of being an immensely large corporation with billions of dollars at its disposal, but it is not itself government.

Facebook, as a private entity, is allowed to implement as much censorship as it wants. If Facebook wanted to word-filter the word “email” and ban anyone who used it, they could do so, and it’s not in any way illegal. Anyone who has their content removed from Facebook and cries about censorship and free speech violations has a fundamental misunderstanding of what free speech is.

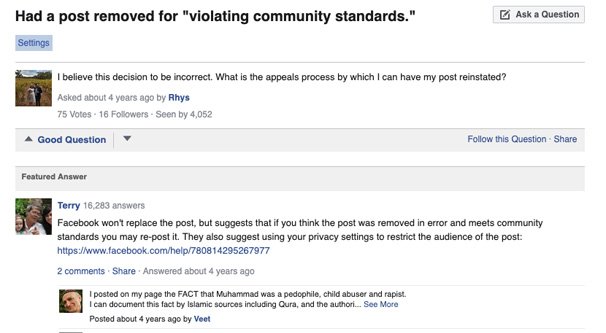

Just because you believe something to be true does not mean it is not hateful. One of the biggest things that causes Facebook to remove content and suspend users is hateful content. Whether this is racism, sexism, or anti-religious rhetoric (as seen in this four-year-old comment), if it is hateful, it is a violation of Facebook’s policies. Again, they are perfectly in the right to remove that content as they see fit.

In the example above, this guy “believes” in certain “facts” about the Islamic religion and tradition. However, his “facts” serve only to brace his hateful speech against that religious group. Whether or not his sources are legitimate, he’s using them to justify hate, and that’s a violation of Facebook’s policies.

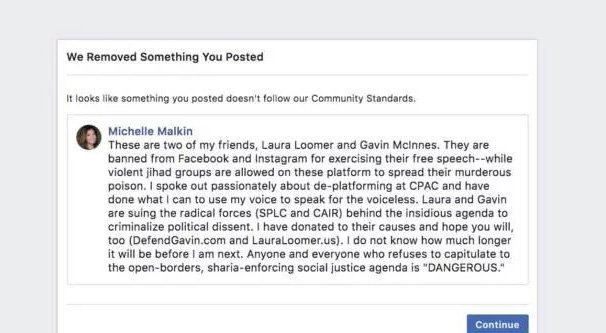

Now, we all know that Facebook is not very good at their job when it comes to edge cases of hate speech and other community violations. They’ve removed fine art because it contains nudity while allowing pornographic accounts. They’ve removed the “hateful imagery” of a book cover when the book is about the damage of racism, while allowing racism in thousands of Facebook Groups across the platform. They rail against fake news, but refuse to remove obviously faked videos.

As with any “fairness” argument, of course, just because someone else gets away with it does not mean it’s allowed. The fact that some people in the world sell drugs does not make drug distribution legal for you. All it means is that Facebook needs to do a better job, between their automatic detection, their manual reviewers, and their community standards.

So, what kinds of content can get your posts removed and your account suspended?

The Facebook Community Guidelines

Facebook maintains a document of community guidelines, which is meant to determine what is and isn’t allowed on their platform. The guidelines aim to be fair for everyone on their platform, keeping in mind various regional governments around the world.

In addition to the public community guidelines, Facebook maintains an internal document that guides their human moderators. See, when content is reported, Facebook scans it with an automatic system. If it’s obviously in violation of their policies, such as using racial slurs without specific extenuating factors, the automatic system takes action. In fringe cases, and for spot-checking the algorithms, some selection of the reported content is sent to human moderators. You can follow the link above (the manual reviewers one) to see how horrible their working conditions are.

The human moderators are meant to keep up with this ever-changing document, which is a much more in-depth version of the community standards and is constantly being adjusted. As new kinds of hate speech and new slurs are invented, Facebook has to adapt.

Facebook also maintains two other official documents, the violation of which can get your account suspended. These are the terms of service and the data policy.

- The Facebook Terms of Service is a legal document you agree to when you register a Facebook account. It governs many things, from your control over your privacy to disclosures on how Facebook is allowed to use your data. Violation of the terms of service is rare, since it typically involves scam apps and other data privacy violations. Chances are, you aren’t going to be banned for a violation of the terms of service without knowing exactly what you’re doing. We’re talking Cambridge Analytica level violations here. The only exceptions are if you’re in a banned group, that is, people who are under the age of 13, people who are convicted sex offenders, and people who are prohibited by law from accessing Facebook or the internet in general.

- The Facebook Data Policy is another official legal document that governs Facebook more than it governs you. It’s their disclosure of how they harvest information and what permissions you grant them to use it, as well as how you can control their usage. It includes statements of your rights on how to pursue legal action against people violating your data privacy, and what options you have if you believe Facebook is violating it. In general, nothing in that document will get you banned without some extreme action.

- The Facebook Community Standards are the document you need to be concerned with. This is the document that guides what you can and cannot post on Facebook and how you’re able to use content you find on Facebook. It covers everything from pornography to hate speech to intellectual property. Now, this document is constantly evolving, so it’s worth reading through every few months to see what’s new and what has changed. I highly recommend reading it at least once. That said, I’m going to give you a rough summary of what it includes here.

Facebook’s intended guiding principles are safety for its audience and community, giving a voice to diverse viewpoints, and providing an equitable platform for a diverse audience. They try to avoid giving a platform to hate speech, while making sure that minority groups are given their platform and are allowed to speak about their experiences without being banned.

This is pretty tricky. Given a world where sites like Facebook, YouTube, and Twitter all struggle with ways to filter content, it’s no surprise that the rules are sweeping and inconsistent. If you ban offensive words, anyone using them in the context of an academic discussion or a personal anecdote will be banned as well, despite the completely different sentiment. That’s why so much slips through the cracks.

In any case, here’s a rundown of broad categories that are restricted or banned on Facebook. The first category relates to violence and criminal behavior.

- Anything that threatens a person or calls for violence is a violation. Fake threats and jokes are difficult to discern, and Facebook will often err on the side of “better safe than sorry.” Credible threats will result in more than just a ban; Facebook works with law enforcement for these kinds of posts.

- Anything that promotes or endorses a violent organization or organized crime is a violation. You can’t support a terrorist organization, you can’t promote human trafficking, and so on. A post that praises the leader of the KKK for doing a good job, for example, will be removed.

- Anything that promotes or glorifies violent crime, theft, and fraud is a violation. Obviously, news about such actions is allowed, but if you’re promoting it and soliciting copycats, it’s a violation.

- Anything that attempts to organize or coordinate harm, be it property theft or a hate crime or anything in between, is a violation.

- Anything that promotes the sale or trafficking of regulated goods – drugs, firearms, endangered species, and so on – is a violation. Facebook does not want to be a black market.

The second section relates to the safety of people in general and Facebook users in specific.

- Anything that encourages or graphically depicts self-harm or suicide is a violation. Experts repeatedly suggest that allowing the depiction or glorification of self-harm encourages more self-harm, so Facebook tries to avoid being responsible for such actions. This goes for everything from a commenter posting “kill yourself” to someone they hate, to someone attempting to livestream their own suicide.

- Anything involving child nudity or child sexual exploitation is a violation, for obvious reasons.

- Anything involving the sexual exploitation of adults is a violation. This is a tricky line to draw, as there is an ongoing argument about the validity of sex work, but Facebook would prefer not to become a marketplace for sex.

- Anything involving bullying or harassment is a violation. This is a very tricky category and includes different standards for different types of people. Harassment against minorities, children, and protected groups is taken more seriously than harassment of public figures, since public figures expect a certain level of disagreement, while less public individuals may find it to be a more credible threat. This is a line that constantly changes, and sometimes catches light-hearted ribbing as bullying.

- Anything that invades someone’s privacy, such as sharing confidential or personal information, doxing, and similar actions, is a violation. This can also be considered attempting to incite violence in some contexts.

The third category talks about objectionable forms of content. This is also where a lot of the variable and evolving policies can be found.

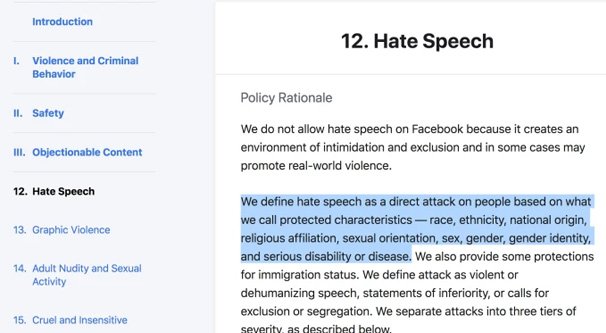

- Anything that involves hate speech is a violation. Hate speech is anything that can be construed as a direct attack on a protected class, be it based on race, religion, sexuality, national origin, gender, or other characteristics. This is such a tricky conversation that Facebook even has a blog category discussing it.

- Anything that depicts or glorifies violence or includes graphic content is a violation. Facebook doesn’t want people on their platform exposed to gore and needless violence, and while some content can be allowed in limited circumstances, it is given a disclaimer label and hidden behind a user action required to see it.

- Anything including adult nudity and sex is a violation. Sexual freedom and the discussion of sexuality is fine, but actually posting photos or videos of sex acts is not. There’s a lot of nuance here.

- Anything including sexual solicitation is a violation. Again, Facebook does not want to be an escort marketplace.

- Anything that is cruel and insensitive is a violation. This is also pretty self-explanatory.

The next section has to do with integrity and authenticity.

Facebook wants to be a platform for honest people, and as such will take action against dishonesty as much as possible.

- Spam is a violation. False advertising, fraud, phishing, and so on are all dangerous to the community and will be removed.

- Misrepresentation is a violation. Pretending to be someone you aren’t can get your account removed. This covers everything from someone running a personal profile for their cat to someone pretending to be a politician.

- Fake news is a violation. This is, again, a very tricky category, and is an ongoing discussion that needs to be refined.

The fifth section is about intellectual property.

- Facebook endeavors to take intellectual property rights – copyright, trademark, and so on – seriously. Stealing content or representing content you don’t own as your own is a violation. This can be anything from plagiarizing a book to posting artwork without credit.

Finally, the last section is about content requests that Facebook will comply with.

- If a user requests the removal of their own account, the memorialization or removal of the account of a deceased family member or of a person for whom they are the executor, or the removal of the account of an incapacitated person, Facebook will comply.

- If content deals with or is posted by a minor, or is requested to be removed by the government due to involvement of child abuse, or is requested to be removed by a legal guardian, Facebook will remove it.

So as you can see, there are a lot of different reasons why Facebook might remove content, might temporarily or permanently suspend an account, or otherwise take action to work with authorities to pursue legal action. Some are more common than others, but it’s worth knowing them all.

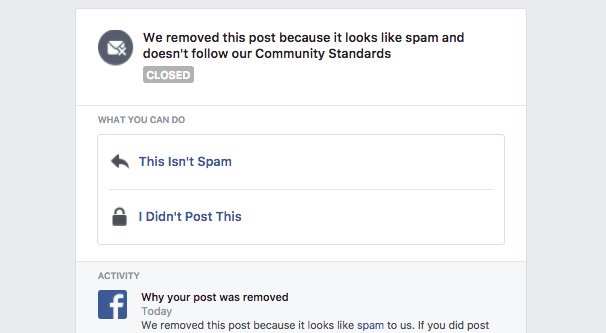

So what happens if your content is removed and you don’t think it’s in violation of one of the above policies? You can file an appeal with Facebook. You will need to identify what caused the content to be removed, then explain to Facebook via a report why that content is not actually in violation of a policy.

Facebook is under no compulsion to restore content or unsuspend accounts that have violated their guidelines. An appeal may restore your account, but it may also just confirm the validity of a removal. It’s often best to simply draw back and look at why your content was removed, and think about what inherent biases may have led to that action.

My account was hacked and now I’m locked out by facebook

Hi John! I’m sorry to hear that – have you tried reaching out to them to see if you could get it back? If you still have the original email tied to the account, can you do a password reset?

I’ve recently had a rash of comments blocked that don’t violate any of these standards. I linked my partner to post I thought he’d appreciate, it was blocked. I responded to a friends post agreeing with the post and it got blocked. I sent a comment asking why my comment was blocked and it was blocked. I’m very confused.

So, tell me how is it that wishing my friend happy Anniversary goes against their community guidelines?! This is BS.

Hello, I repeatedly had several personal responses deleted tonight only due to not meeting Community Standards. They had no offensive content at all, from any point of view? I was simply responding to my friends post on my page. This has happened on 4 out of about 7 replies I’ve made tonight on posts on my page only. The replies deleted were me responding to a post from a good friend and talking about – how busy I’ve been as my husband needs surgery and I’ve been handling all the work on our farm and I’m tired. That’s it??? I am so totally in the dark here. Help?

I had a family photo deleted because they said it was spam. The picture was with a post was about my father who had just passed away. All pictures were family pictures we had taken a week earlier. I don’t understand it either. I appealed and nothing.

So they blocked something. Dont know what, and no appeal option. I call bull on this not being censorship and first amendment violations. I want to report this to commity looking into Facebook, but they wont tell me why how or what I fif

yeah but FB did not remove a lot of posts which clearly and directly implies racism, etc. Somehow even after many reports. The post was not taken down. Also, a lot of foreign language posts got removed although the content is clean. Worst FB even punished the content creators.

Facebook was no help in removing my picture, which wasn’t public, and a post that lied about me. This was done on a page that was under a fake name. The particular person who did this has had several complaints about the same thing, but is still allowed to continue to do this.

Today, I commented on a NY Times article about Americans who are “confused” by all the impeachment information and they are tuning it out. It wrote: “Unfortunately, some Americans are intellectually lazy” Facebook sent me a notice that I was in violation. WHAT? I asked FB to reconsider and they rejected it a second time saying that I have violated rule of the community.

How can I tell if it was Facebook or a person who reported that I violated community rules?

I just realized I have 22 notices of violating community standards but 20 of them were reviewed and restored. My question is why can’t I see what posts they’re saying were violations?

On Facebook marketplace there are so many scams such as Amazon is hiring $50 an hour and a free laptop. EBT is giving children in school $750 etc. You report the profile and it gets a response of we’ve checked this out everything is fine. But if you post a comment calling people who fall for the scam stupid they remove it for community content